MUMBAI - Artificial intelligence (AI) enthusiasts see the US$40 billion 2008 Marvel franchise movie Iron Man differently. To them, it predicts not just future computing interfaces, AI, and AI-outfitted robots, but also what makes them all possible: cognitive computing. Protagonist Tony Stark’s interactions with his two AI-robotic arms and his digital assistant, Just A Rather Very Intelligent System (J.A.R.V.I.S), represent the Holy Grail of computing and AI.

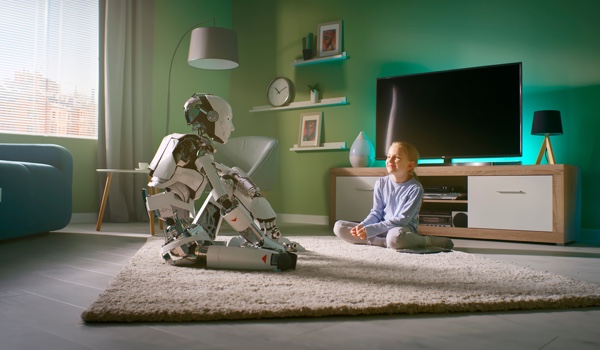

A 2019 Medscape survey¹ found that almost half of all doctors in the United States were uncomfortable with AI. Patient sentiment toward AI is even worse. The reason? People do not trust machines over humans. Yet machines, from X-ray and magnetic resonance imaging (MRI) scanners to robotic arms for precision surgery, have become indispensable in the healthcare sector. This raises the question: would doctors and patients think differently if their interactions with AI were more humanlike, as Tony Stark’s are with J.A.R.V.I.S?

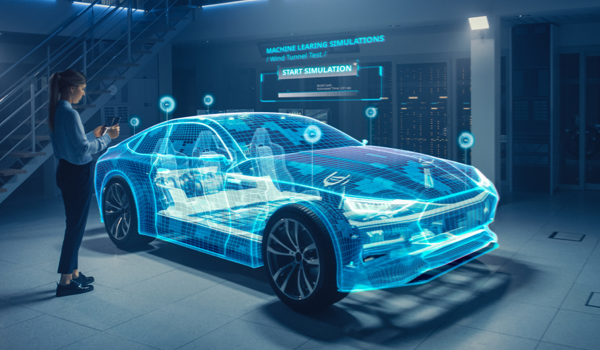

The idea of cognitive machines is as old as human imagination: Talos, for example, is a giant bronze guardian automaton from Greek mythology. But for the first time in human history, we have the tool to make such a machine: cognitive computing. Cognitive computing refers to a complex web of technologies, including machine learning (ML), natural language processing, speech recognition, human-computer interaction, object recognition, and the ability of AI to generate accurate narratives from complex findings. Together, these technologies give us a digital system that understands and interacts with people just as humans do with each other.

Cognitive Computing and AI

Computing, AI, and cognitive computing derived from the same conceptual base in the 1940s and 1950s. It can be argued that the ultimate purpose of computing visualized by early developers, such as UK sc

The content herein is subject to copyright by The Yuan. All rights reserved. The content of the services is owned or licensed to The Yuan. Such content from The Yuan may be shared and reprinted but must clearly identify The Yuan as its original source. Content from a third-party copyright holder identified in the copyright notice contained in such third party’s content appearing in The Yuan must likewise be clearly labeled as such.
