HEIDELBERG, GERMANY - We experience life through our brains. Artificial intelligence (AI) enthusiasts therefore dream of creating an autonomous thinking system that can perceive, reason, learn, plan, and act: an AI that mimics our brains, a brain-like machine.

Machines can now do things that were once the sole domain of humans: beat the world's top chess players, drive cars, and translate between languages. But this is not just a story of human versus machine. Researchers also draw on neuroscience to help computers make more human-like decisions.

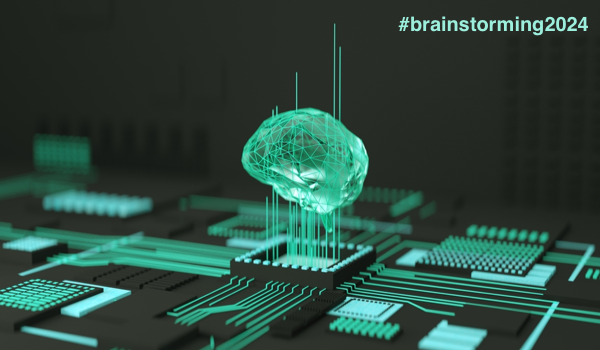

Neuroscience inspired the field of AI and continues to do so. In 1975, for example, the Japanese scientist Kunihiko Fukushima introduced the neocognitron, the first convolutional neural network (CNN) architecture.1 This type of neural network can give machines vision.

In computer vision, a CNN comprises many layers. The first layer takes in the image and convolves it with a set of weights. Its output passes to the next layer, which applies different weights. This process repeats across the network: forward propagation carries the signal through the layers, and backward propagation carries the error back to adjust the weights. The deeper the neural network, the more weights and computations it takes to run. Calculating and optimizing the weights is how the artificial neural network (ANN) learns. This learning process is computationally intensive and requires a lot of storage space.
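The layered process described above can be illustrated with a minimal NumPy sketch. It is not Fukushima's neocognitron or any production CNN. The function names (`conv2d`, `forward`, `loss_and_grad`), the 8x8 random image, the 3x3 kernels, the squared-error loss, and the learning rate are all assumptions chosen for illustration. The first part stacks two convolutional layers, each with its own weights. The second part shows one forward and backward pass that nudges a single layer's weights toward a target.

```python
import numpy as np

def conv2d(image, kernel):
    """Valid-mode 2D cross-correlation, the operation CNN layers call convolution."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            # Each output value is a weighted sum over one image patch.
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def relu(x):
    """Simple nonlinearity applied between layers."""
    return np.maximum(x, 0.0)

def forward(image, kernels):
    """Forward propagation: each layer's output feeds the next layer's weights."""
    activation = image
    for kernel in kernels:
        activation = relu(conv2d(activation, kernel))
    return activation

def loss_and_grad(image, kernel, target):
    """Squared-error loss of one conv layer and its gradient w.r.t. the weights."""
    error = conv2d(image, kernel) - target
    loss = 0.5 * np.sum(error ** 2)
    # Backward propagation for this layer: correlate the input with the error.
    grad = conv2d(image, error)
    return loss, grad

rng = np.random.default_rng(0)
image = rng.random((8, 8))  # hypothetical 8x8 grayscale image

# Part 1: two stacked layers; deeper stacks mean more weights and more compute.
kernels = [rng.standard_normal((3, 3)) for _ in range(2)]
output = forward(image, kernels)
n_weights = sum(k.size for k in kernels)
print(output.shape, n_weights)  # (4, 4) 18

# Part 2: one learning step toward the output of a hidden "true" kernel.
true_kernel = rng.standard_normal((3, 3))
target = conv2d(image, true_kernel)
kernel = np.zeros((3, 3))
learning_rate = 0.001  # assumed small enough for this toy problem
loss_before, grad = loss_and_grad(image, kernel, target)
kernel = kernel - learning_rate * grad
loss_after, _ = loss_and_grad(image, kernel, target)
print(loss_after < loss_before)
```

Even in this toy setting, every output value requires a pass over an image patch, and every learning step requires a full forward and backward pass, which hints at why training deep networks is computationally intensive.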

The learning process emulates the way biological neurons and synapses work to a high degree - at least theoretically. Biological neurons are slower, but they are energy efficient; therefore, neuromorphic engineering aims to mimic

The content herein is subject to copyright by The Yuan. All rights reserved. The content of the services is owned or licensed to The Yuan. Such content from The Yuan may be shared and reprinted but must clearly identify The Yuan as its original source. Content from a third-party copyright holder identified in the copyright notice contained in such third party’s content appearing in The Yuan must likewise be clearly labeled as such.
