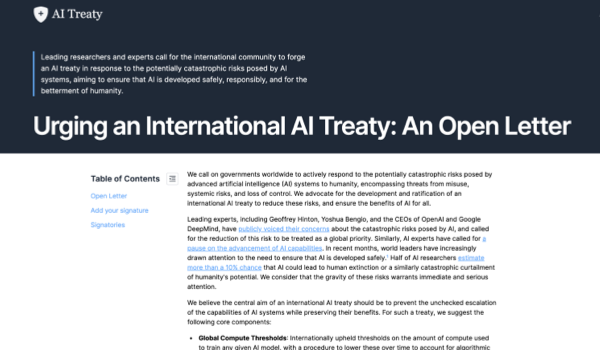

STOCKHOLM - A genuine revolution in artificial intelligence (AI) is having a profound impact¹ on the world across a wide range of areas, affecting people’s lives daily. The use of AI also carries a number of pitfalls², particularly in the absence of an adequate global regulatory framework. Nevertheless, access to new technologies and greater efficiency across many tasks hold the potential to help build a more sustainable world. One way to harness that potential is through the framework of the Sustainable Development Goals (SDGs) proposed by the United Nations (UN).

The SDGs make up the UN’s 2030 Agenda for Sustainable Development, which was adopted by all UN member states in September 2015. Although the agreement imposes no legal obligations, countries commit to developing adequate frameworks to achieve the goals. The philosophy of the SDGs rests on a set of shared values aimed at ensuring peace and prosperity for everyone.

One area where AI can really help is SDG 3, which concerns good health and well-being. A number of studies have documented the use of AI, more specifically deep neural networks (DNNs), to help diagnose a wide range of diseases³, with cancer detection⁴ perhaps the best-known example. This area certainly holds significant positive potential, since massive amounts of data can be analyzed robustly and consistently.

There are, however, problems connected with the lack of interpretability of deep learning (DL) models when they are deployed in medical applications. A model is interpretable if, given the input data, it is possible to understand exactly how its outputs (or predictions) were obtained. DNNs are based on adjusting a high numbe

The content herein is subject to copyright by The Yuan. All rights reserved. The content of the services is owned or licensed to The Yuan. Such content from The Yuan may be shared and reprinted but must clearly identify The Yuan as its original source. Content from a third-party copyright holder identified in the copyright notice contained in such third party’s content appearing in The Yuan must likewise be clearly labeled as such.